Latent HSJA

Unrestricted Black-box Adversarial Attack Using GAN with Limited Queries

Authors

- Dongbin Na, Sangwoo Ji, Jong Kim

- Pohang University of Science and Technology (POSTECH), Pohang, South Korea

Abstract

Adversarial examples are inputs intentionally generated to fool a deep neural network. Recent studies have proposed unrestricted adversarial attacks that are not norm-constrained. However, previous unrestricted attack methods are still limited in their ability to fool real-world applications in a black-box setting.

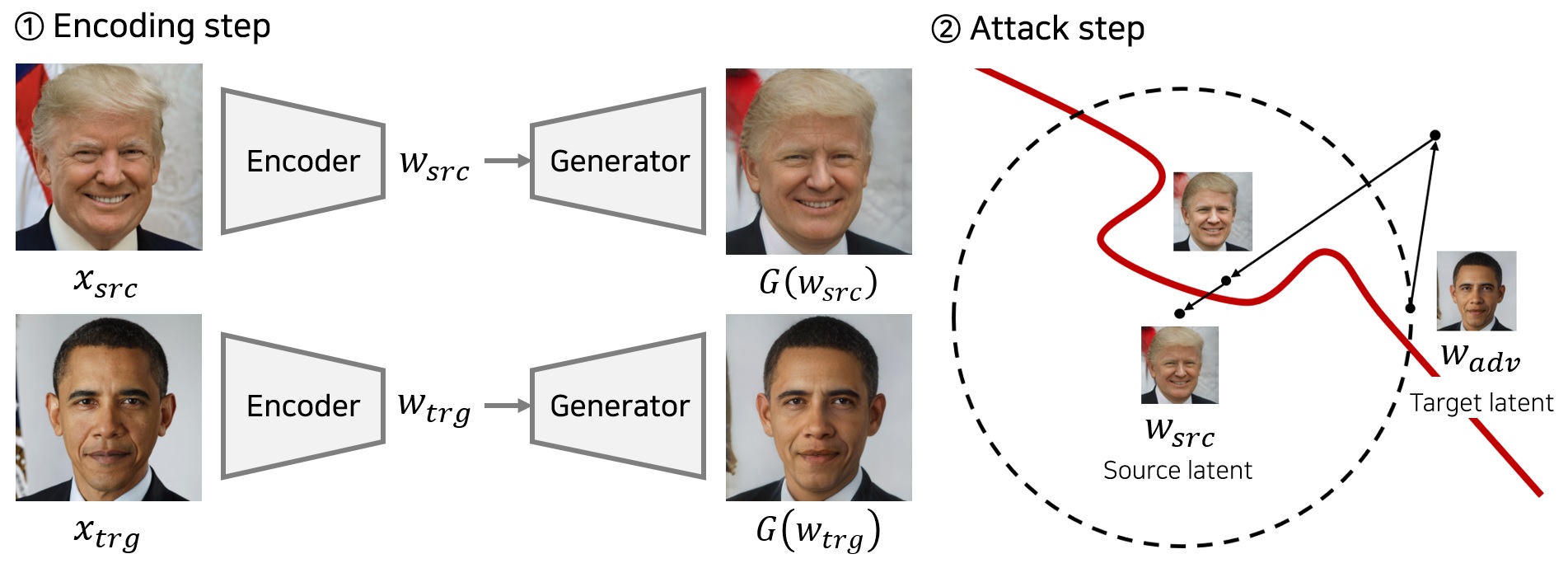

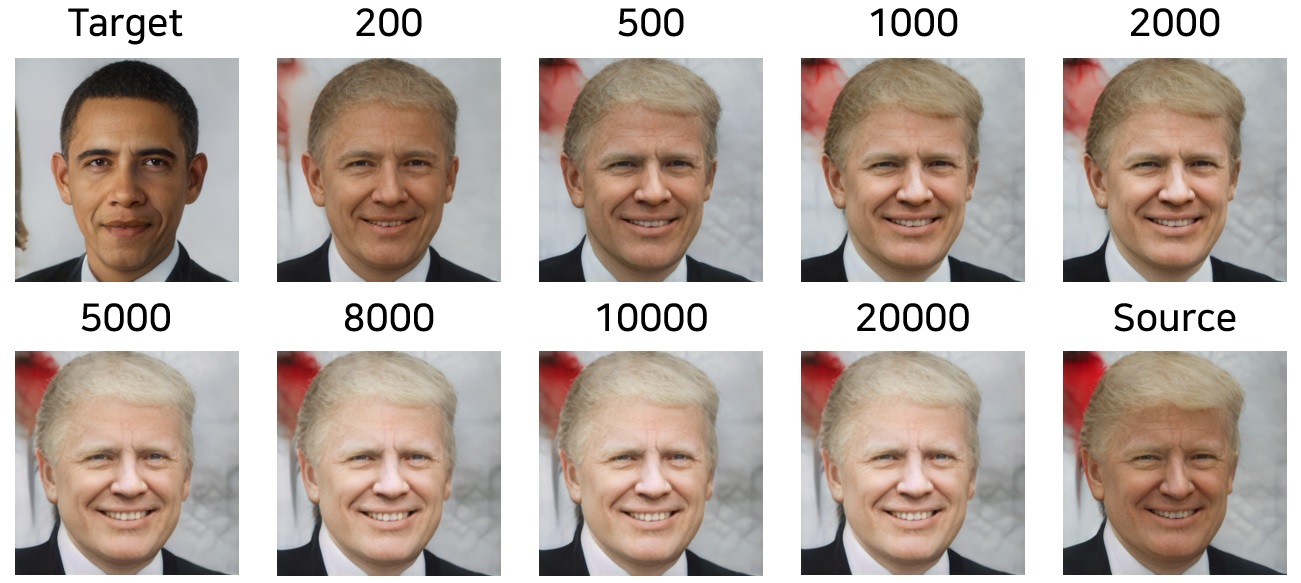

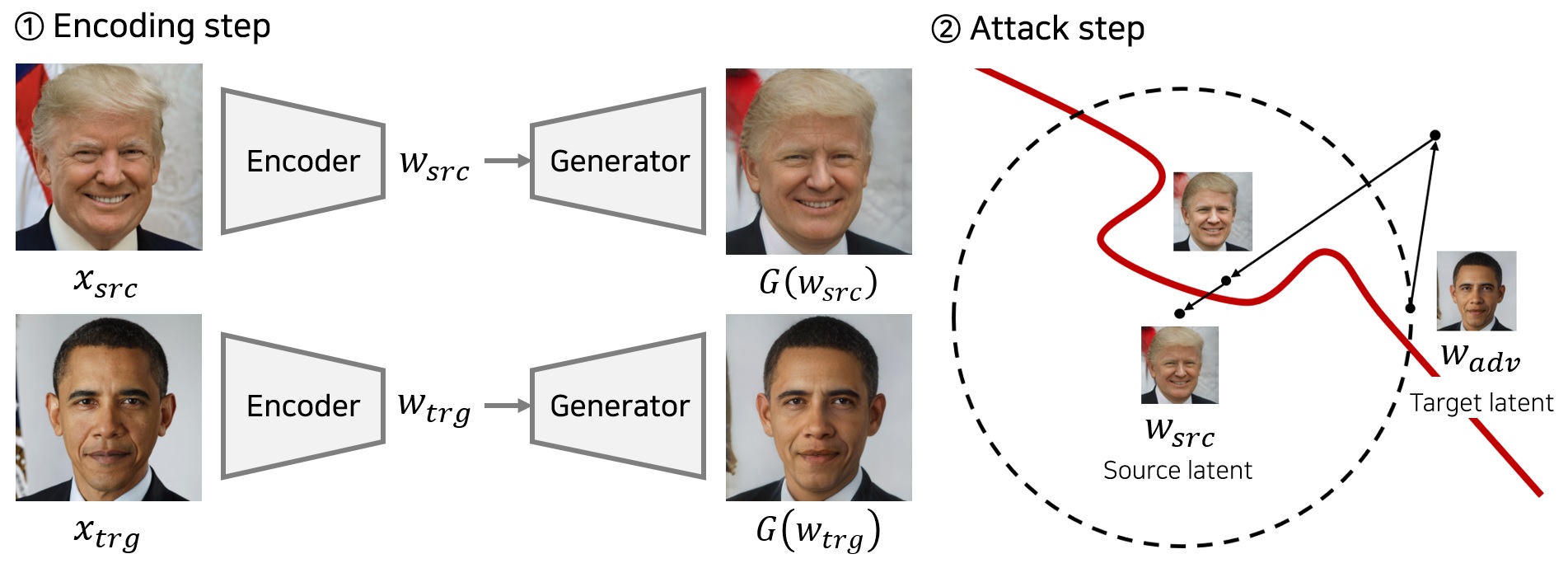

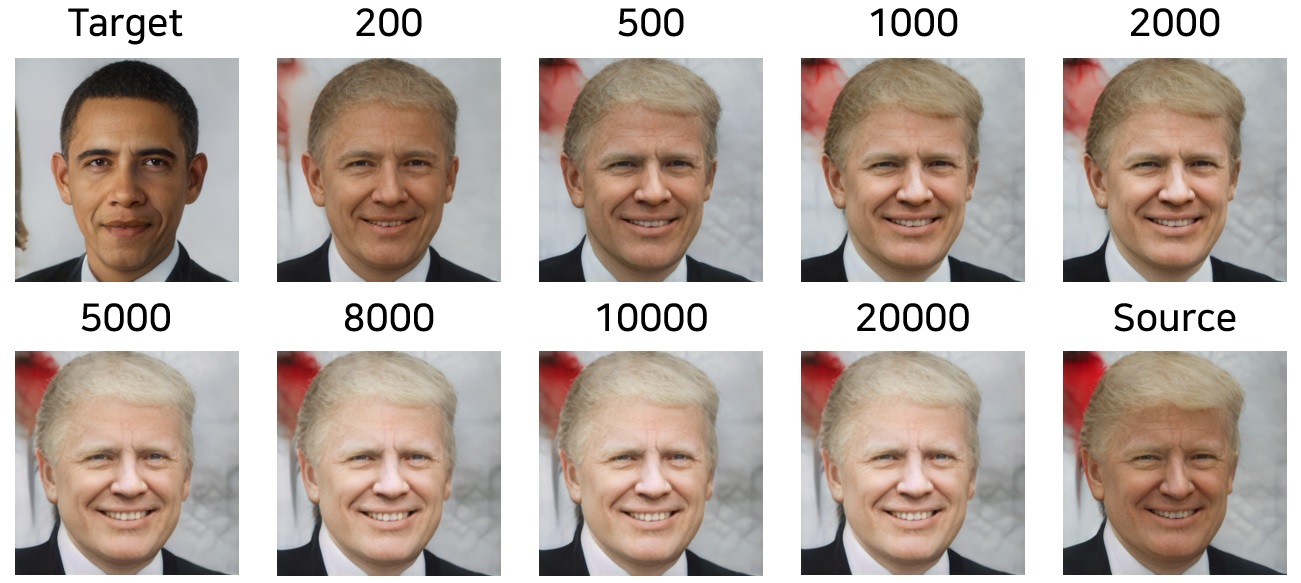

In this paper, we present a novel method for generating unrestricted adversarial examples using a GAN, where an attacker can only access the top-1 final decision of a classification model. Our method, Latent-HSJA, efficiently leverages the advantages of a decision-based attack in the latent space and successfully manipulates the latent vectors to fool the classification model.
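As a rough illustration of what a decision-based search in latent space involves, the sketch below performs only a boundary binary search between two latent vectors, using nothing but a top-1 decision oracle. It is not the Latent-HSJA implementation: `blend`, `boundary_search`, and the toy generator and classifier are hypothetical stand-ins, and the gradient-estimation steps of a full decision-based attack are omitted.

```python
from typing import Callable, List, Sequence, Tuple


def blend(w_src: Sequence[float], w_tgt: Sequence[float], alpha: float) -> List[float]:
    """Linear interpolation in latent space: alpha=0 gives w_src, alpha=1 gives w_tgt."""
    return [(1 - alpha) * s + alpha * t for s, t in zip(w_src, w_tgt)]


def boundary_search(
    is_adversarial: Callable[[Sequence[float]], bool],
    w_src: Sequence[float],
    w_tgt: Sequence[float],
    tol: float = 1e-3,
) -> Tuple[List[float], int]:
    """Binary search along the segment w_src -> w_tgt for a latent vector that is
    still adversarial but as close to w_src as the tolerance allows.

    Assumes w_src is not adversarial and w_tgt is. Returns the latent vector and
    the number of oracle queries spent.
    """
    if not is_adversarial(w_tgt):
        raise ValueError("w_tgt must already be classified as the target label")
    queries = 1
    lo, hi = 0.0, 1.0  # lo: assumed non-adversarial side, hi: adversarial side
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if is_adversarial(blend(w_src, w_tgt, mid)):
            hi = mid
        else:
            lo = mid
        queries += 1
    return blend(w_src, w_tgt, hi), queries


# Toy stand-ins (assumptions, not the real StyleGAN2 generator or face classifier).
def toy_generator(w: Sequence[float]) -> List[float]:
    return list(w)


def toy_classifier(x: Sequence[float]) -> int:
    return 1 if sum(x) > 1.0 else 0


TARGET_LABEL = 1


def is_adversarial(w: Sequence[float]) -> bool:
    # The attacker only observes the top-1 label of F(G(w)).
    return toy_classifier(toy_generator(w)) == TARGET_LABEL


w_adv, queries = boundary_search(is_adversarial, [0.0, 0.0], [2.0, 2.0])
print(w_adv, queries)
```

Each oracle call corresponds to one query to the classifier, which is why the query count is tracked explicitly in a limited-query setting.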

Demonstration

Source Codes

Datasets

- All datasets are based on the CelebAMask-HQ dataset.

- The original dataset contains 6,217 identities.

- The original dataset contains 30,000 face images.

1. Celeb-HQ Facial Identity Recognition Dataset

- This dataset is curated for the facial identity classification task.

- There are 307 identities (celebrities).

- Each identity has 15 or more images.

- The dataset contains 5,478 images.

- There are 4,263 train images.

- There are 1,215 test images.

- Dataset download link (527MB)

Dataset/
    train/
        identity 1/
        identity 2/
        ...
    test/
        identity 1/
        identity 2/
        ...

2. Celeb-HQ Face Gender Recognition Dataset

Dataset/
    train/
        male/
        female/
    test/
        male/
        female/
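Both datasets use one sub-folder per class inside each split, so a downloaded copy can be sanity-checked by counting images per class. The helper below is a minimal sketch using only the standard library; `count_images_per_class` and the accepted file extensions are assumptions for illustration, not part of the repository.

```python
from pathlib import Path
from typing import Dict

# Assumed image extensions; adjust to match the files in the downloaded archive.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def count_images_per_class(split_dir: Path) -> Dict[str, int]:
    """Return {class folder name: number of image files} for one split directory."""
    counts: Dict[str, int] = {}
    for class_dir in sorted(p for p in split_dir.iterdir() if p.is_dir()):
        counts[class_dir.name] = sum(
            1
            for f in class_dir.iterdir()
            if f.is_file() and f.suffix.lower() in IMAGE_SUFFIXES
        )
    return counts


if __name__ == "__main__":
    train_dir = Path("Dataset") / "train"
    if train_dir.is_dir():
        per_class = count_images_per_class(train_dir)
        print(len(per_class), "classes,", sum(per_class.values()), "images")
```

For the identity dataset, the totals can be compared against the figures listed above (307 identities, 4,263 train images, 1,215 test images).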

Classification Models to Attack

- Input images have a resolution of 256 × 256 (normalization 0.5).
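A minimal preprocessing sketch follows, assuming that "normalization 0.5" means a per-channel mean and standard deviation of 0.5 applied after scaling pixels to [0, 1], which maps values to [-1, 1]. The `preprocess` function is hypothetical, and the image is assumed to be already resized to 256 × 256.

```python
import numpy as np


def preprocess(image_uint8: np.ndarray, mean: float = 0.5, std: float = 0.5) -> np.ndarray:
    """Convert an HxWx3 uint8 image into a 3xHxW float32 array normalized with mean/std.

    Assumption: the image has already been resized to 256 x 256.
    """
    if image_uint8.shape != (256, 256, 3):
        raise ValueError(f"expected shape (256, 256, 3), got {image_uint8.shape}")
    scaled = image_uint8.astype(np.float32) / 255.0  # [0, 255] -> [0, 1]
    normalized = (scaled - mean) / std               # [0, 1] -> [-1, 1] when mean = std = 0.5
    return np.transpose(normalized, (2, 0, 1))       # channels-first layout


black = preprocess(np.zeros((256, 256, 3), dtype=np.uint8))
white = preprocess(np.full((256, 256, 3), 255, dtype=np.uint8))
print(black.shape, float(black.min()), float(white.max()))
```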

Generate Datasets for Experiments with Encoding Networks

- For this work, we utilize the pixel2style2pixel (pSp) encoder network.

- E(·) denotes the pSp encoding method that maps an image into a latent vector.

- G(·) is the StyleGAN2 model that generates an image given a latent vector.

- F(·) is a classification model that returns a predicted label.

- We can validate the performance of an encoding method given a dataset that contains (image x, label y) pairs.

- Consistency accuracy denotes the ratio of test images such that F(G(E(x))) = F(x) over all test images.

- Correctly consistency accuracy denotes the ratio of test images such that F(G(E(x))) = F(x) and F(x) = y over all test images.

- We can generate a dataset in which images are correctly consistent.
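The two metrics and the filtering step defined above can be sketched as follows. This is an illustrative example only: `consistency_metrics` is a hypothetical helper, and `E`, `G`, and `F` are passed in as plain callables so that toy stand-ins can replace the real pSp encoder, StyleGAN2 generator, and classifier.

```python
from typing import Any, Callable, Hashable, Iterable, List, Tuple


def consistency_metrics(
    pairs: Iterable[Tuple[Any, Hashable]],
    E: Callable[[Any], Any],
    G: Callable[[Any], Any],
    F: Callable[[Any], Hashable],
) -> Tuple[float, float, List[Tuple[Any, Hashable]]]:
    """Return (consistency acc., correctly consistency acc., correctly consistent pairs)."""
    total = 0
    consistent = 0
    kept: List[Tuple[Any, Hashable]] = []
    for x, y in pairs:
        total += 1
        original_label = F(x)
        reconstructed_label = F(G(E(x)))
        if reconstructed_label == original_label:
            consistent += 1
            if original_label == y:
                kept.append((x, y))  # F(G(E(x))) = F(x) and F(x) = y
    if total == 0:
        raise ValueError("pairs must not be empty")
    return consistent / total, len(kept) / total, kept


# Toy stand-ins (assumptions): scalar "images", a lossy encode/decode, a threshold classifier.
toy_E = lambda x: x
toy_G = lambda w: w + 0.2
toy_F = lambda x: int(x > 1.0)

toy_pairs = [(0.5, 0), (0.9, 0), (1.5, 1), (2.0, 0)]
cons_acc, correct_acc, kept_pairs = consistency_metrics(toy_pairs, toy_E, toy_G, toy_F)
print(cons_acc, correct_acc, kept_pairs)
```

In the toy data, the second image changes label after reconstruction and the fourth is consistently misclassified, so only the first and third pairs are kept.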

| Task | SIM | LPIPS | Consistency acc. | Correctly consistency acc. | Links |
|---|---|---|---|---|---|
| Identity Recognition | 0.6465 | 0.1504 | 70.78% | 67.00% | (code \| dataset) |
| Gender Recognition | 0.6355 | 0.1625 | 98.30% | 96.80% | (code \| dataset) |

Training the Original Classification Models

Citation

If this work is useful for your research, please cite our paper:

@inproceedings{na2022unrestricted,
  title={Unrestricted Black-Box Adversarial Attack Using GAN with Limited Queries},
  author={Na, Dongbin and Ji, Sangwoo and Kim, Jong},
  booktitle={European Conference on Computer Vision},
  pages={467--482},
  year={2022},
  organization={Springer}
}